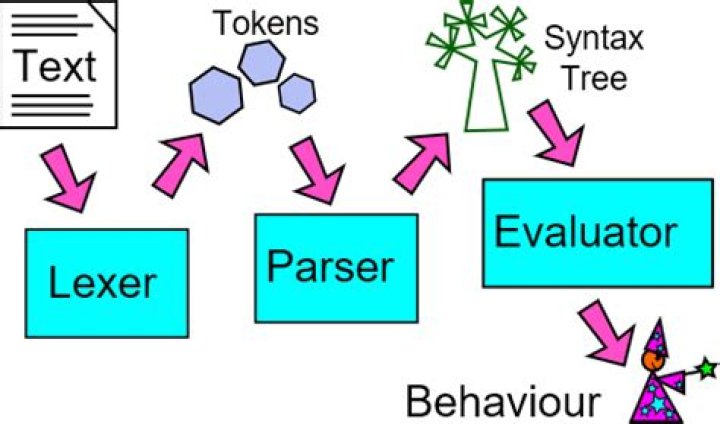

What does a lexer do?

A lexer turns a string of characters, which has no structure of its own, into a flat list of tokens such as “number literal”, “string literal”, “identifier”, or “operator”. Along the way it can recognize reserved identifiers (“keywords”) and discard whitespace. Formally, a lexer recognizes a set of regular languages, one per kind of token.
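A minimal sketch of such a lexer in Python, using only the standard re module. Each token kind is a regular expression in a named group, whitespace is matched and thrown away, and identifiers found in a keyword set are reclassified as keywords. The token names (NUMBER, STRING, IDENT, KEYWORD, OP) and the keyword set are illustrative assumptions, not taken from any particular language.

```python
import re

# Hypothetical keyword set for illustration.
KEYWORDS = {"if", "else", "while", "return"}

# Each token kind is a regular language, written as a regex in a named group.
# Alternatives are tried in order, so "==" must come before "=".
TOKEN_SPEC = [
    ("NUMBER", r"\d+(?:\.\d+)?"),
    ("STRING", r'"(?:[^"\\]|\\.)*"'),
    ("IDENT", r"[A-Za-z_][A-Za-z0-9_]*"),
    ("OP", r"==|!=|<=|>=|[+\-*/=<>(){};]"),
    ("SKIP", r"\s+"),      # whitespace: matched but discarded
    ("MISMATCH", r"."),    # any other character is an error
]
MASTER = re.compile("|".join(f"(?P<{name}>{pattern})" for name, pattern in TOKEN_SPEC))


def tokenize(text):
    """Return a flat list of (kind, lexeme) pairs for text."""
    tokens = []
    for match in MASTER.finditer(text):
        kind, lexeme = match.lastgroup, match.group()
        if kind == "SKIP":
            continue
        if kind == "MISMATCH":
            raise SyntaxError(f"unexpected character {lexeme!r} at {match.start()}")
        if kind == "IDENT" and lexeme in KEYWORDS:
            kind = "KEYWORD"  # reserved identifiers become keywords
        tokens.append((kind, lexeme))
    return tokens
```

For example, tokenize('if x >= 10') returns [('KEYWORD', 'if'), ('IDENT', 'x'), ('OP', '>='), ('NUMBER', '10')].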

What is the purpose of a lexer?

A lexer takes an input character stream and converts it into tokens. This serves a variety of purposes: you could apply transformations to the lexemes for simple text processing and manipulation, or you could feed the token stream to a parser, which converts it into a parse tree.

What are lexer rules?

In ANTLR, rules defined within a lexer grammar must have a name beginning with an uppercase letter. These rules implicitly match characters on the input stream instead of tokens on the token stream. Referenced grammar elements include token references (implicit lexer rule references), characters, and strings.

Is a lexer necessary?

A complete parser is usually composed of two parts: a lexer (also known as a scanner or tokenizer) and the parser proper. The parser needs the lexer because it does not work directly on the text but on the output produced by the lexer.

What is a lexer in C?

A lexer pre-processes the source code so as to reduce the complexity of the parser. A lexer is also a kind of compiler: it consumes source code and outputs a token stream. Lookahead of k characters, lookahead(k), is used to fully determine the meaning of the current character or token.
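To illustrate lookahead with k = 1, here is a sketch (in Python, for brevity) of a scanner for a few comparison operators. It peeks at the next character without consuming it to decide whether = is a token by itself or the start of ==, and likewise for <, >, and !. The operator set is a hypothetical example.

```python
def scan_operators(text):
    """Split text into operator tokens using one character of lookahead."""
    tokens = []
    i = 0
    while i < len(text):
        ch = text[i]
        if ch.isspace():
            i += 1
            continue
        # Peek at the next character without consuming it: lookahead(1).
        nxt = text[i + 1] if i + 1 < len(text) else ""
        if ch in "=!<>" and nxt == "=":
            tokens.append(ch + nxt)  # two-character operator such as == or <=
            i += 2
        elif ch in "=<>":
            tokens.append(ch)        # the lookahead showed it stands alone
            i += 1
        else:
            raise SyntaxError(f"unexpected character {ch!r} at {i}")
    return tokens
```

Without the peek, the scanner could not tell after reading < whether it has seen the whole token.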

How are tokens recognized?

The terminals of the grammar, which are if, then, else, relop, id, and number, are the names of tokens as far as the lexical analyzer is concerned. … For this language, the lexical analyzer will recognize the keywords if, then, and else, as well as lexemes that match the patterns for relop, id, and number.
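A sketch of a scanner for exactly these tokens. The excerpt elides the actual patterns, so the relop lexemes (<, <=, =, <>, >, >=) and the id and number patterns below are assumptions that follow the common textbook presentation of this example.

```python
import re

KEYWORDS = {"if", "then", "else"}

# Longer relop alternatives come first so "<=" is not split into "<" and "=".
PATTERNS = [
    ("ws", r"\s+"),
    ("relop", r"<=|<>|>=|<|>|="),
    ("id", r"[A-Za-z][A-Za-z0-9]*"),
    ("number", r"\d+(?:\.\d+)?(?:E[+-]?\d+)?"),
]
SCANNER = re.compile("|".join(f"(?P<{n}>{p})" for n, p in PATTERNS))


def scan(text):
    """Return (token_name, lexeme) pairs; keywords are reported under their own name."""
    pos, result = 0, []
    while pos < len(text):
        m = SCANNER.match(text, pos)
        if m is None:
            raise SyntaxError(f"no token matches at position {pos}")
        name, lexeme = m.lastgroup, m.group()
        pos = m.end()
        if name == "ws":
            continue  # whitespace separates tokens but is not passed to the parser
        if name == "id" and lexeme in KEYWORDS:
            name = lexeme  # the keywords if, then, else are their own token names
        result.append((name, lexeme))
    return result
```

For instance, scan("a<>b") yields [('id', 'a'), ('relop', '<>'), ('id', 'b')].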

What is a Golang lexer?

A Golang lexer is simply a lexer written in Go. A lexer is a software component that analyzes a string and breaks it up into its component parts. … For natural languages (such as English), lexical analysis can be difficult to do automatically but is usually easy for a human to do.

What's the difference between parser and lexer?

A parser goes one level further than the lexer: it takes the tokens produced by the lexer and tries to determine whether proper sentences have been formed. Parsers work at the grammatical level; lexers work at the word level.

What is the benefit of using a lexer before a parser?

In some lexer libraries, the iterator exposed by the lexer buffers the most recently emitted tokens, which significantly speeds up parsing of grammars that require backtracking. The tokens created at runtime can also carry arbitrary token-specific data, which the parser can access as attributes.
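A sketch of what such buffering can look like. TokenStream is a hypothetical wrapper, not any particular library's API: it keeps every token it has pulled from the lexer, so a backtracking parser can mark a position, try one alternative, and rewind without lexing the same input twice.

```python
class TokenStream:
    """Wraps a token iterator and buffers emitted tokens so the parser can backtrack."""

    def __init__(self, tokens):
        self._source = iter(tokens)
        self._buffer = []  # every token pulled from the lexer so far
        self._pos = 0      # index of the next token to hand to the parser

    def advance(self):
        """Return the next token, or None at end of input."""
        if self._pos == len(self._buffer):
            tok = next(self._source, None)  # only now does the lexer do any work
            if tok is None:
                return None
            self._buffer.append(tok)
        tok = self._buffer[self._pos]
        self._pos += 1
        return tok

    def mark(self):
        """Remember the current position."""
        return self._pos

    def reset(self, position):
        """Rewind to a position returned by mark(); buffered tokens are reused."""
        self._pos = position


if __name__ == "__main__":
    stream = TokenStream(["x", "+", "1"])
    start = stream.mark()
    print(stream.advance(), stream.advance())  # x +
    stream.reset(start)                        # backtrack to try another alternative
    print(stream.advance())                    # x (served from the buffer)
```

A real buffer would typically also discard tokens once no mark can refer to them, to keep memory bounded.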

What are a lexer, a parser, and an interpreter?

A lexer is the part of an interpreter that turns a sequence of characters (plain text) into a sequence of tokens. A parser, in turn, takes a sequence of tokens and produces an abstract syntax tree (AST) of a language. The rules by which a parser operates are usually specified by a formal grammar.
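A compact sketch of that pipeline for arithmetic expressions: a regex-based lexer produces tokens, and a recursive-descent parser turns them into an AST built from nested tuples. The grammar is an assumption chosen for illustration: expr → term (("+" | "-") term)*, term → factor (("*" | "/") factor)*, factor → NUMBER | "(" expr ")".

```python
import re


def lex(text):
    """Lexer: characters -> list of ('NUM', int) or ('OP', str) tokens."""
    tokens = []
    for m in re.finditer(r"[0-9]+|\S", text):  # whitespace is never matched, so it is skipped
        lexeme = m.group()
        if lexeme.isdigit():
            tokens.append(("NUM", int(lexeme)))
        elif lexeme in "+-*/()":
            tokens.append(("OP", lexeme))
        else:
            raise SyntaxError(f"unexpected character {lexeme!r}")
    return tokens


class Parser:
    """Recursive-descent parser: tokens -> AST such as ('+', 1, ('*', 2, 3))."""

    def __init__(self, tokens):
        self.tokens = tokens
        self.pos = 0

    def peek(self):
        return self.tokens[self.pos] if self.pos < len(self.tokens) else None

    def take(self):
        tok = self.peek()
        if tok is None:
            raise SyntaxError("unexpected end of input")
        self.pos += 1
        return tok

    def parse(self):
        tree = self.expr()
        if self.peek() is not None:
            raise SyntaxError(f"unexpected token {self.peek()}")
        return tree

    def expr(self):
        node = self.term()
        while self.peek() in (("OP", "+"), ("OP", "-")):
            op = self.take()[1]
            node = (op, node, self.term())  # loop keeps operators left-associative
        return node

    def term(self):
        node = self.factor()
        while self.peek() in (("OP", "*"), ("OP", "/")):
            op = self.take()[1]
            node = (op, node, self.factor())
        return node

    def factor(self):
        kind, value = self.take()
        if kind == "NUM":
            return value
        if value == "(":
            node = self.expr()
            if self.take() != ("OP", ")"):
                raise SyntaxError("expected ')'")
            return node
        raise SyntaxError(f"unexpected token {value!r}")
```

Parser(lex("1 + 2 * (3 - 4)")).parse() produces ('+', 1, ('*', 2, ('-', 3, 4))). Because the grammar gives * and / their own level, precedence is encoded in the tree's shape rather than in extra bookkeeping.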

Which one is a lexer generator?

ANTLR is one example: it can generate both lexical analyzers and parsers.

What are the lexer and parser in ANTLR?

ANTLR (ANother Tool for Language Recognition) is a lexer and parser generator aimed at building and walking parse trees. It makes it much easier to parse nontrivial text inputs such as programming language syntax.

What is a lexer in Python?

In Pygments, everything you need can be found in the pygments.lexer module. As the API documentation explains, a lexer is a class that is initialized with some keyword arguments (the lexer options) and that provides a get_tokens_unprocessed() method, which is given a string or unicode object with the data to parse.

What is the use of a lexical analyzer?

Lexical analysis is the first phase of a compiler. It takes the source code, as modified by any language preprocessors, in the form of sentences, and breaks it into a series of tokens, removing any whitespace and comments along the way.

What is lexical analysis?

Lexical analysis is the first phase of a compiler, and the component that performs it is also known as a scanner. It converts the high-level input program into a sequence of tokens. Lexical analysis can be implemented with deterministic finite automata (DFAs).
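A sketch of a hand-written DFA for two token classes: identifiers (an ASCII letter followed by letters or digits) and unsigned integers. The state names and transition function are illustrative assumptions. The driver runs the DFA from each token's start and applies the longest-match rule by remembering the last accepting state it passed through.

```python
import string

LETTERS = set(string.ascii_letters)
DIGITS = set(string.digits)

# DFA states; IN_ID and IN_NUM are accepting states.
START, IN_ID, IN_NUM = "start", "in_id", "in_num"
ACCEPTING = {IN_ID: "id", IN_NUM: "number"}


def step(state, ch):
    """Transition function of the DFA; returns None when no transition exists."""
    if state == START:
        if ch in LETTERS:
            return IN_ID
        if ch in DIGITS:
            return IN_NUM
    elif state == IN_ID:
        if ch in LETTERS or ch in DIGITS:
            return IN_ID
    elif state == IN_NUM:
        if ch in DIGITS:
            return IN_NUM
    return None


def dfa_tokens(text):
    """Run the DFA repeatedly, taking the longest match at each position."""
    pos, tokens = 0, []
    while pos < len(text):
        if text[pos].isspace():
            pos += 1  # whitespace separates tokens
            continue
        state, i, last_accept = START, pos, None
        while i < len(text):
            state = step(state, text[i])
            if state is None:
                break  # no transition: stop scanning this token
            i += 1
            if state in ACCEPTING:
                last_accept = (i, ACCEPTING[state])  # longest match seen so far
        if last_accept is None:
            raise SyntaxError(f"no token starts at position {pos}")
        end, kind = last_accept
        tokens.append((kind, text[pos:end]))
        pos = end
    return tokens
```

Generators such as Lex build the same kind of transition table automatically from regular expressions instead of requiring it to be written by hand.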

For which phase of compilation is Yacc used?

Yacc is used in the syntax analysis (parsing) phase. It is a program that compiles an LALR(1) grammar into the source code of a syntactic analyzer (parser) for the language described by that grammar. The input to Yacc is the grammar rules, and the output is a C program.

How do you make a lexer in Python?

- Library import and token definition: import ply.lex as lex (the library import), followed by a list of token names. …

- Define regular expression rules for simple tokens: PLY uses Python's re module to find regex matches for tokenization.

What is token specification?

There are three specifications of tokens: 1) strings, 2) languages, and 3) regular expressions.

How are regular expressions used in token specification?

The lexical analyzer needs to scan and identify only the valid strings (tokens or lexemes) that belong to the language at hand. … Regular expressions can express these languages by defining a pattern for finite strings of symbols, which is why regular expressions are used in token specification.

What is the use of tokens in compiler design?

The token name is an abstract symbol representing a kind of lexical unit, e.g., a particular keyword, or sequence of input characters denoting an identifier. The token names are the input symbols that the parser processes. A pattern is a description of the form that the lexemes of a token may take.

How do the lexer and parser communicate?

- The lexer eagerly converts the entire input string into a vector of tokens. …

- Each time the lexer finds a token, it invokes a function on the parser, passing the current token. …

- Each time the parser needs a token, it asks the lexer for the next one.
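The three styles above can be sketched side by side. The toy token definition (any run of non-space characters) and the on_token callback are hypothetical; the point is only which side drives the other.

```python
import re

WORD = re.compile(r"\S+")  # toy token definition: any run of non-space characters


def lex_all(text):
    """Eager: convert the entire input into a list of tokens up front."""
    return WORD.findall(text)


def lex_push(text, on_token):
    """Push: call the parser's on_token function once for each token found."""
    for match in WORD.finditer(text):
        on_token(match.group())


def lex_pull(text):
    """Pull: a generator that scans the next token only when the parser asks for it."""
    for match in WORD.finditer(text):
        yield match.group()
```

With lex_pull, the parser drives the process by calling next() on the generator whenever it needs another token; with lex_push, the lexer drives, and the parser must keep its own state between callbacks.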

What does parser do with tokens?

A parser is a compiler or interpreter component that breaks data into smaller elements for easy translation into another language. A parser takes input in the form of a sequence of tokens, interactive commands, or program instructions and breaks them up into parts that can be used by other components in programming.

Is a lexer part of a parser?

Lexers and parsers are not very different. Both are based on simple language formalisms: regular languages for lexers and, almost always, context-free (CF) languages for parsers.
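The difference between the two formalisms shows up with nesting. A single token class can be matched by a regular expression, but checking that parentheses are balanced to arbitrary depth is a context-free property that no true regular expression can express. A depth counter, standing in here for a parser's stack, handles it easily. The functions below are an illustrative sketch.

```python
import re

# Regular: a single token class such as an integer literal.
INTEGER = re.compile(r"[0-9]+")


def balanced(tokens):
    """Context-free check: are '(' and ')' properly nested? Uses a depth counter."""
    depth = 0
    for tok in tokens:
        if tok == "(":
            depth += 1
        elif tok == ")":
            depth -= 1
            if depth < 0:
                return False  # a ')' with no matching '('
    return depth == 0
```

With several kinds of brackets, a single counter is no longer enough and a real stack is needed, which is exactly what a context-free parser provides.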

What is a C++ lexer?

The C++ Lexer Toolkit Library (LexerTk) is a lexer (lexicographical generator) designed to be simple to use, easy to integrate, and extremely fast. The tokens generated by the lexer can be used as input to a parser such as “ExprTk”.

Why is parsing important?

Syntactic parsing, the process of obtaining the internal structure of sentences in natural languages, is a crucial task for artificial intelligence applications that need to extract meaning from natural language text or speech.

How do you create an interpreter in C++?

If you don’t know how interpreters and compilers work, even if that really bothers you, do not worry. If you work through a step-by-step series that builds an interpreter and a compiler, you will know how they work in the end.

What is a compiler and interpreter?

Compilers and interpreters are programs that help convert source code written in a high-level language into machine code that the computer can understand. … A compiler scans the entire program and translates the whole of it into machine code at once. An interpreter takes much less time to analyze the source code.

How do you create an interpreter in Java?

You have to specify the language’s syntax rules, and you have to write some additional logic in Java that implements the semantics of your scripting language. If you make an interpreter, that Java code executes the script directly; if you make a compiler instead, the Java code you write generates further Java (or other) code.

What does a top-down parser generate?

A top-down parser generates a parse for the given input string with the help of the grammar productions by expanding the non-terminals; that is, it starts from the start symbol and ends at the terminals. It uses leftmost derivation.
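A small sketch of top-down parsing for the hypothetical grammar S → a S b | ε. The recursive-descent function for S always expands the leftmost non-terminal and records each resulting sentential form, so the returned list is the leftmost derivation of the input.

```python
def leftmost_derivation(text):
    """Parse text with S -> 'a' S 'b' | ε and return the sentential forms of the derivation."""
    forms = ["S"]  # derivation starts from the start symbol
    pos = 0

    def expect(ch):
        nonlocal pos
        if pos < len(text) and text[pos] == ch:
            pos += 1
        else:
            raise SyntaxError(f"expected {ch!r} at position {pos}")

    def parse_s(depth):
        # depth = number of 'a's already matched, so the current form is a^depth S b^depth.
        if pos < len(text) and text[pos] == "a":
            # Apply S -> a S b to the leftmost (and only) non-terminal.
            forms.append("a" * (depth + 1) + "S" + "b" * (depth + 1))
            expect("a")
            parse_s(depth + 1)
            expect("b")
        else:
            # Apply S -> ε; only terminals remain.
            forms.append("a" * depth + "b" * depth)

    parse_s(0)
    if pos != len(text):
        raise SyntaxError(f"unexpected input at position {pos}")
    return forms
```

Joining the result with " => " for the input "aabb" gives S => aSb => aaSbb => aabb: the parse starts at the start symbol and ends at a string of terminals, as described above.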

What is the use of compiler?

A compiler is computer software that translates (compiles) source code written in a high-level language (e.g., C++) into a set of machine-language instructions that can be understood by a digital computer’s CPU. … Other compilers generate machine language directly.

Which one is a type of lexeme?

Constants and keywords are both types of lexemes, so of the quiz options listed (b. Constants, c. Keywords, d. All of the mentioned), the answer is “All of the mentioned”.